Demystifying “Open-Source” Generative AI: Not All Open Is Truly Open

Hey everyone, it’s metamood here, your go-to for unpacking tech chaos into simple, real-talk vibes. Whether you’re just dipping your toes into AI or you’ve been geeking out for years, I love making this stuff feel approachable. Today, we’re chatting about a super timely research paper from the ICIS 2025 conference: “Making Sense of Open-Source Generative AI Models: A Taxonomy and Archetypes” by Lorenzo Diaferia, Leonardo Maria De Rossi, and Aakanksha Gaur from SDA Bocconi School of Management.

Generative AI, the magic behind tools that whip up text, images, or code, is everywhere now. Around 71% of organizations report using it in at least one part of their business. But building these powerful models from scratch? That’s insanely expensive: it takes massive computing power, huge datasets, and expert teams. So most companies just grab ready-made models from providers like OpenAI, Anthropic, or Google.

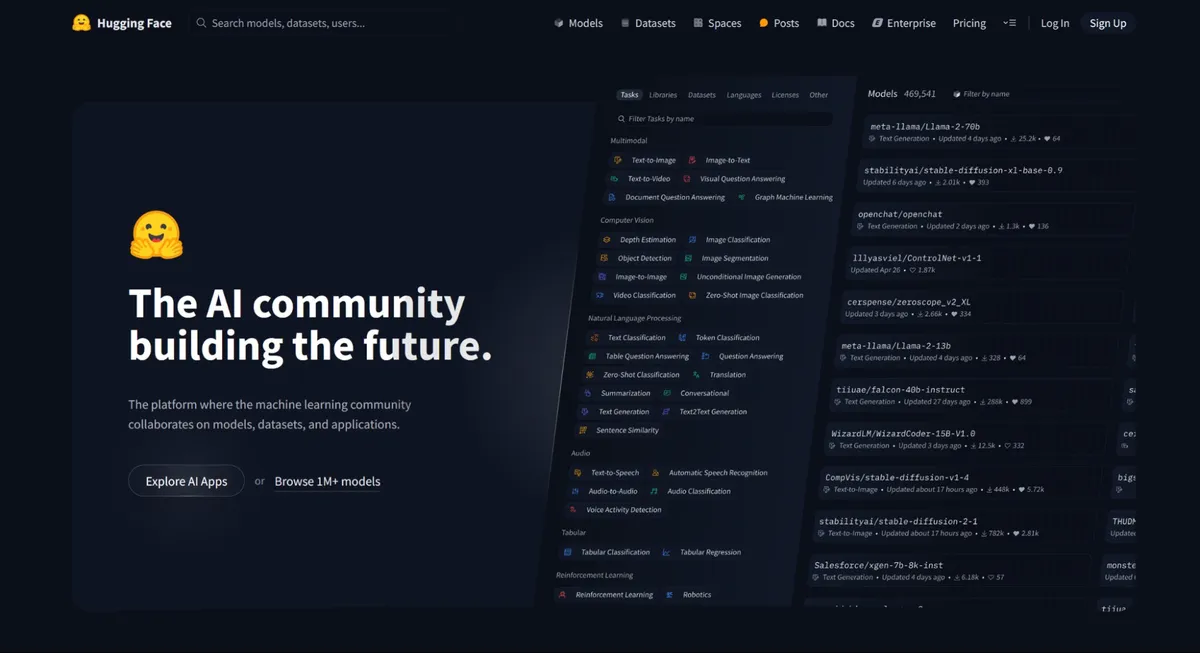

That’s where “open-source” generative AI comes in. It promises more transparency and freedom to tweak things yourself. Platforms like Hugging Face are booming with over two million models shared by the community. Companies are leaning in hard because open-source options can spark faster innovation and cut costs.

But hold up – the “open-source” label in AI isn’t always what it seems. Traditional open-source software means full access to the source code, plus the freedom to modify and share it. AI models are different: besides code, they include the “weights” (the trained brain), the training data, and more. Many so-called open models hide key parts or add restrictions. This is called “open-washing”: marketing that makes a model sound more open than it really is.

Examples? Meta’s Llama series gets called open, but its license restricts some commercial use (very large services, for instance, need separate permission from Meta), and the training data stays secret. That can trap companies in dependencies, hide biases, or create legal risks if the AI outputs harmful stuff.

The researchers saw this confusion and built a clear way to sort it out.

A Simple Way to Measure “Openness”

They created a taxonomy – basically a categorization system – with three main areas:

- Technical Openness: What parts do you actually get? Full code, weights, training data for true replication? Or just scraps?

- Governance: Who’s running the show? A big company controlling everything, or an open community collaborating?

- Licensing and Usage Rights: The rules. Can you freely modify and sell derivatives (as with a permissive license like MIT)? Or must you release your changes under the same terms (copyleft)? Some licenses add ethics rules, like no harmful uses.

All of this breaks down into 8 dimensions and 18 traits, so you can score any model consistently. No more vague promises!
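Want to see what “scoring” a model could look like? Here’s a tiny Python sketch. Big caveat: this post doesn’t list the paper’s actual 8 dimensions and 18 traits, so every dimension and trait name below (and the 0-to-1 scoring rule) is a hypothetical stand-in made up for illustration, not the authors’ method. The idea is simply that each dimension has a few ordered traits, from most closed to most open, and each area’s score is the average position of a model’s traits.

```python
# Hypothetical mini-taxonomy: area -> dimension -> traits, ordered from most closed to most open.
# These names are illustrative assumptions, NOT the paper's actual 8 dimensions / 18 traits.
TAXONOMY = {
    "technical": {
        "weights": ["withheld", "released"],
        "training_data": ["undisclosed", "described", "released"],
    },
    "governance": {
        "steward": ["single_company", "consortium", "community"],
    },
    "licensing": {
        "commercial_use": ["restricted", "allowed"],
        "derivatives": ["forbidden", "copyleft", "permissive"],
    },
}


def score_model(traits):
    """Return a 0-1 openness score per area (0 = most closed, 1 = most open).

    `traits` maps every dimension name in TAXONOMY to the trait observed for one model.
    """
    scores = {}
    for area, dimensions in TAXONOMY.items():
        per_dimension = []
        for dimension, levels in dimensions.items():
            trait = traits[dimension]
            if trait not in levels:
                raise ValueError(f"unknown trait {trait!r} for {dimension!r}")
            # The trait's position in the ordered list, scaled to [0, 1].
            per_dimension.append(levels.index(trait) / (len(levels) - 1))
        # An area's score is the plain average of its dimensions.
        scores[area] = sum(per_dimension) / len(per_dimension)
    return scores


# An imaginary release: open weights, secret data, company-run, mixed license terms.
example = {
    "weights": "released",
    "training_data": "undisclosed",
    "steward": "single_company",
    "commercial_use": "restricted",
    "derivatives": "permissive",
}
print(score_model(example))
# {'technical': 0.5, 'governance': 0.0, 'licensing': 0.5}
```

Ranking copyleft below permissive here is a simplification: both are genuinely open licenses, they just attach different conditions. A careful assessment would probably keep the per-dimension traits visible instead of collapsing everything into one number per area.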

Real-World Check: 248 Models, 6 Common Types

They didn’t just theorize. The team analyzed 248 popular open-source generative AI models from Hugging Face and other sources (2023-2025 era). Using cluster analysis, they found six archetypes – typical patterns:

- Corporate Restrictive Models: From big companies, partial sharing but heavy limits on use and changes. Good for basic stuff, but no full freedom.

- Open-Science Community Models: Built by decentralized groups (researchers, enthusiasts). Almost everything shared openly, no strings – great for pure innovation.

- Plus four hybrid types, like startups that open some parts but not others, or models that are fully transparent but centrally governed. (Think of Stability AI’s Stable Diffusion as a classic example of partial openness.)

They validated the archetypes with AI experts through interviews and a workshop, and everyone agreed they’re practical and spot-on.
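How do you get from trait profiles to archetypes? The post only says the team used cluster analysis, so here’s a toy version using k-means, which is my pick for illustration and not necessarily the algorithm the authors used. Each fictional model is a vector of three made-up openness scores (technical, governance, licensing, each from 0 to 1), and the algorithm groups similar vectors together.

```python
import math


def kmeans(points, k, iters=100):
    """Plain k-means: returns a cluster label (0..k-1) for each point."""
    # Deterministic toy init: the first k points become the starting centroids.
    # (Real implementations usually use randomized or k-means++ initialization.)
    centroids = [list(p) for p in points[:k]]
    labels = None
    for _ in range(iters):
        # Assignment step: each point joins its nearest centroid (Euclidean distance).
        new_labels = [
            min(range(k), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        if new_labels == labels:
            break  # assignments stopped changing, so we've converged
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


# Fictional models: (technical, governance, licensing) openness scores in [0, 1].
models = {
    "corp_1": (0.4, 0.0, 0.2),
    "community_1": (1.0, 1.0, 1.0),
    "corp_2": (0.5, 0.0, 0.3),
    "community_2": (0.9, 1.0, 0.9),
    "corp_3": (0.3, 0.0, 0.1),
    "community_3": (1.0, 0.9, 1.0),
}

labels = kmeans(list(models.values()), k=2)
clusters = {}
for name, label in zip(models, labels):
    clusters.setdefault(label, []).append(name)
print(clusters)
# {0: ['corp_1', 'corp_2', 'corp_3'], 1: ['community_1', 'community_2', 'community_3']}
```

This toy uses k=2 and six fictional models just to show the mechanics. With 248 real models and many more traits, you’d typically try several values of k and check how well-separated the clusters are; the paper landed on six archetypes.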

Why This Matters to All of Us

This paper cuts through the marketing fog and gives real tools for evaluating AI models. For businesses, that means picking models that avoid lock-in, allow fairness audits, and support custom tweaks.

For everyday folks, true open AI could make powerful tech accessible to everyone, not just giants. Demand real openness – it pushes the whole field forward. If you’re curious, head to Hugging Face and play with some models yourself.

Have you spotted open-washing in AI tools you’ve tried? What’s your take? Share in the comments – love hearing your moods on this. Stay curious, stay meta!

Inspired by the full paper – grab it from AIS eLibrary for all the details. Stats updated with latest insights as of late 2025.

References

[1] Diaferia, Lorenzo; De Rossi, Leonardo Maria; Gaur, Aakanksha. “Making Sense of Open-Source Generative AI Models: A Taxonomy and Archetypes” (2025). ICIS 2025 Proceedings. https://aisel.aisnet.org/icis2025/gen_ai/gen_ai/8

[2] Hugging Face image: https://zapier.com/blog/hugging-face/

[3] Meta Llama image: https://hyperight.com/meta-unveils-llama-3-1-a-giant-leap-in-open-source-ai/